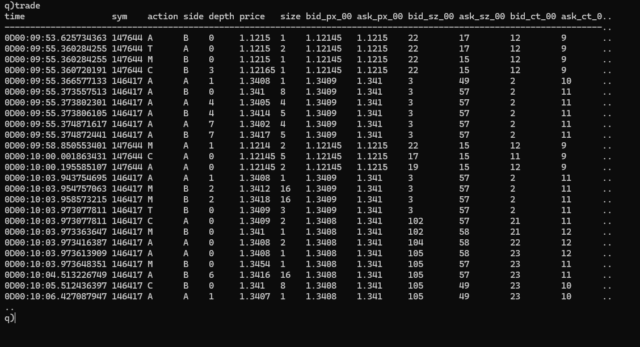

Storing the data in a single table for all symbols has its advantages, but the table would need to be sorted by symbol within your date-partitioned database, with the parted attribute applied to the symbol column. This is critical if you want the best performance when querying by symbol. The question is: how do you get from your in-memory tickerplant table to that on-disk structure, and how do you manage intraday queries? If you are expecting the data to be very large, then I assume you cannot simply hold it all in memory and persist it at EOD. Are you planning on having an intraday writer with some sort of merge process at EOD, similar to what is described in the TorQ package (see WDB here: https://github.com/DataIntellectTech/TorQ/blob/master/docs/Processes.md)?

This design allows you to partition the data by integer intraday, with each integer corresponding to a symbol, and then join all those parts together at EOD to create a symbol-sorted table. It works well, but it does add complexity.
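To make the idea concrete, here is a minimal sketch of the intraday-by-integer, merge-at-EOD pattern. It is plain Python rather than q, it keeps everything in memory instead of writing to disk, and the names (`symbol_ids`, `IntradayWriter`, `merge_eod`, `is_parted`) are hypothetical and not taken from TorQ. The integer per symbol stands in for the enumeration a kdb+ database would use.

```python
from collections import defaultdict


def symbol_ids(symbols):
    """Map each symbol to a stable integer (a stand-in for a sym enumeration)."""
    return {s: i for i, s in enumerate(sorted(set(symbols)))}


class IntradayWriter:
    """Toy intraday writer: appends rows to one bucket per integer partition."""

    def __init__(self, ids):
        self.ids = ids
        self.parts = defaultdict(list)  # int partition -> rows in arrival order

    def append(self, rows):
        # Each batch is split by symbol and appended to that symbol's bucket.
        for row in rows:
            self.parts[self.ids[row["sym"]]].append(row)

    def merge_eod(self):
        # Concatenate the buckets in integer order. Every symbol ends up in
        # one contiguous block, which is what a parted column requires.
        merged = []
        for part in sorted(self.parts):
            merged.extend(self.parts[part])
        return merged


def is_parted(rows):
    """True if each symbol occupies a single contiguous run of rows."""
    seen, prev = set(), None
    for row in rows:
        sym = row["sym"]
        if sym != prev:
            if sym in seen:
                return False
            seen.add(sym)
            prev = sym
    return True
```

Because each bucket is only ever appended to, arrival (time) order is preserved within a symbol, so the merged result is both grouped by symbol and time-ordered inside each group without a full sort at EOD.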

Getting back to your current setup, you can parallelise the data consumption by creating additional consumers (tickerplants) and distributing the topic names across them. Alternatively, you could have a single “trade” topic in Kafka with multiple partitions. For example, if you have 2 consumers and 4 partitions, you can parallelise the consumption of messages, with each consumer reading 2 partitions. Kafka handles that distribution of partitions across consumers automatically, which simplifies things: you no longer have to specify explicitly which topics consumer 1 should read, as distinct from consumer 2. The single-topic, multiple-partition setup also works well if consumer 1 dies unexpectedly, in which case consumer 2 will automatically take over reading all partitions, giving you a degree of resilience.
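The 2-consumer, 4-partition example can be illustrated with a toy model of the assignment. This is not the Kafka client API; `assign` is a hypothetical function that mimics a simple round-robin strategy, and the real assignment depends on the assignor configured for the consumer group.

```python
def assign(partitions, consumers):
    """Toy round-robin assignment of partitions to the live consumers."""
    live = sorted(consumers)
    if not live:
        return {}
    assignment = {c: [] for c in live}
    for i, partition in enumerate(sorted(partitions)):
        assignment[live[i % len(live)]].append(partition)
    return assignment


partitions = [0, 1, 2, 3]

# Both consumers alive: each one reads two partitions.
before = assign(partitions, ["consumer1", "consumer2"])

# consumer1 dies: after the rebalance, consumer2 owns every partition.
after = assign(partitions, ["consumer2"])
```

In a real deployment you would not write this logic yourself. Consumers that share a group id get this behaviour from the broker, which is the simplification over hand-mapping topics to consumers.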

I would suggest reading this blog post from DataIntellect:

https://dataintellect.com/blog/kafka-kdb-java/

In the design presented there, the tickerplant could potentially be removed once you have a Kafka broker in place. The broker is already persisting the data to different partitions (log files), so it provides the recovery mechanism that a tickerplant would usually be responsible for. The subscribers to the tickerplant could simply consume directly from the Kafka broker. In this design, your consumers could be intraday writers and RDBs.
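As a rough illustration of why the broker can stand in for the tickerplant log, here is a toy model of offset-based replay. It does not use a Kafka client; `PartitionLog` and `Rdb` are hypothetical classes, and the assumption is that a restarted consumer rebuilds its state by re-reading the partition from a known offset, much as an RDB replays a tickerplant log.

```python
class PartitionLog:
    """Toy stand-in for one broker partition: an append-only list of records."""

    def __init__(self):
        self._records = []

    def append(self, record):
        self._records.append(record)
        return len(self._records) - 1  # offset of the record just written

    def read_from(self, offset):
        # Return (offset, record) pairs starting at the requested offset.
        return list(enumerate(self._records))[offset:]


class Rdb:
    """Toy consumer that builds its in-memory table from the log."""

    def __init__(self, log, start_offset=0):
        self.log = log
        self.next_offset = start_offset
        self.rows = []

    def poll(self):
        # Read everything not yet seen and advance the position.
        for offset, record in self.log.read_from(self.next_offset):
            self.rows.append(record)
            self.next_offset = offset + 1
```

If an `Rdb` instance is lost, a fresh one created against the same log with `start_offset=0` recovers the same rows on its first `poll()`, which is the role the tickerplant log file normally plays.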